Cross-Cloud AI Training: The Technical Reality Behind Seamless Migration

Migrating AI training workloads between cloud providers isn't science fiction anymore. Teams now run hundreds of clusters for batch processing, distributed training, and inference, which has made multi-cluster scheduling critical. But making this work reliably is far harder than most organizations realize.

The Migration Challenge Nobody Talks About

When you're training a large language model that costs millions and takes weeks to complete, moving it mid-process feels like performing surgery on an aircraft in flight. Training the 175-billion-parameter OPT model, for example, took about two months on 992 GPUs and hit more than 100 hardware failures along the way, averaging approximately 2 per day.

The problem isn't just technical complexity. It's that a training run interrupted by hardware failure mid-epoch wastes compute hours and can corrupt checkpoints. Infrastructure SLA is a direct input to project timeline reliability. Traditional cloud migration assumes you can pause, move, and resume. AI training doesn't work that way.

Fault-Tolerant Checkpointing: Your Safety Net

Checkpointing isn't optional for cross-cloud training. It's the difference between losing hours of work and continuing where you left off. Checkpointing saves model states periodically, allowing training to resume from the last saved point rather than starting over.
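How often to checkpoint is a trade-off between time spent saving and work lost when a failure hits. As a rough sketch, Young's first-order approximation puts the interval near sqrt(2 × checkpoint cost × mean time between failures). The 30-second save cost below is an illustrative assumption, and the 12-hour mean time between failures is derived from the roughly 2 failures per day cited earlier; neither is a measured value for any particular system.

```python
import math


def young_interval_seconds(checkpoint_cost_s: float, mtbf_s: float) -> float:
    """Young's first-order approximation of the checkpoint interval
    that minimizes expected lost time: sqrt(2 * C * MTBF)."""
    if checkpoint_cost_s <= 0 or mtbf_s <= 0:
        raise ValueError("checkpoint cost and MTBF must be positive")
    return math.sqrt(2.0 * checkpoint_cost_s * mtbf_s)


# Assumed inputs: ~2 failures per day -> MTBF of 12 hours; 30 s per save.
mtbf_s = 12 * 3600
interval_s = young_interval_seconds(30.0, mtbf_s)
print(f"checkpoint roughly every {interval_s / 60:.0f} minutes")
```

The approximation assumes the save cost is small relative to the time between failures, so treat the result as a starting point to tune rather than a fixed answer.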

The challenge is making checkpoints efficient enough that they don't slow down training. Just-in-time (JIT) checkpointing, which saves state only once a failure is detected, has been reported to add negligible steady-state overhead, whereas periodic checkpointing approaches incur overhead that grows with model size. For practical cross-cloud migration, you need checkpointing systems that can:

- Save state in under 30 seconds without interrupting GPU computation

- Store checkpoints in cloud-agnostic formats accessible from any provider

- Handle partial failures where some nodes migrate successfully and others don't

- Verify checkpoint integrity before committing to a migration
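The integrity requirement in the list above can be sketched with nothing more than a hash manifest. This is a minimal illustration, not any particular framework's checkpoint format: the `manifest.json` name, the `*.bin` shard pattern, and the function names are all hypothetical.

```python
import hashlib
import json
import os
import tempfile
from pathlib import Path

MANIFEST = "manifest.json"  # hypothetical file name


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large shards never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_manifest(ckpt_dir: Path) -> None:
    """Record a SHA-256 per shard; write to a temp file, then rename."""
    entries = {p.name: sha256_of(p) for p in sorted(ckpt_dir.glob("*.bin"))}
    tmp = ckpt_dir / (MANIFEST + ".tmp")
    tmp.write_text(json.dumps(entries, indent=2))
    os.replace(tmp, ckpt_dir / MANIFEST)  # atomic on the same filesystem


def corrupted_shards(ckpt_dir: Path) -> list[str]:
    """Return names of shards that are missing or whose hash differs."""
    entries = json.loads((ckpt_dir / MANIFEST).read_text())
    bad = []
    for name, expected in entries.items():
        shard = ckpt_dir / name
        if not shard.is_file() or sha256_of(shard) != expected:
            bad.append(name)
    return bad


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        ckpt = Path(d)
        (ckpt / "shard-0.bin").write_bytes(b"weights")
        (ckpt / "shard-1.bin").write_bytes(b"optimizer state")
        write_manifest(ckpt)
        print(corrupted_shards(ckpt))  # nothing flagged yet
        (ckpt / "shard-1.bin").write_bytes(b"truncated")
        print(corrupted_shards(ckpt))  # shard-1.bin is flagged
```

Running the same check on the destination cloud after the copy, and committing to the migration only when the returned list is empty, covers both the integrity and the partial-failure cases.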

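Memory optimizations feed into this as well. In one reported case, a memory-efficient attention technique reduced the memory usage of the attention layers by 60%, while gradient checkpointing (which recomputes activations during the backward pass instead of storing them) reduced overall memory usage by 45%. Despite the name, gradient checkpointing is a memory optimization rather than a way of saving training state. These optimizations still matter because they affect how quickly you can create portable checkpoints.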

Data Transfer: The Hidden Bottleneck

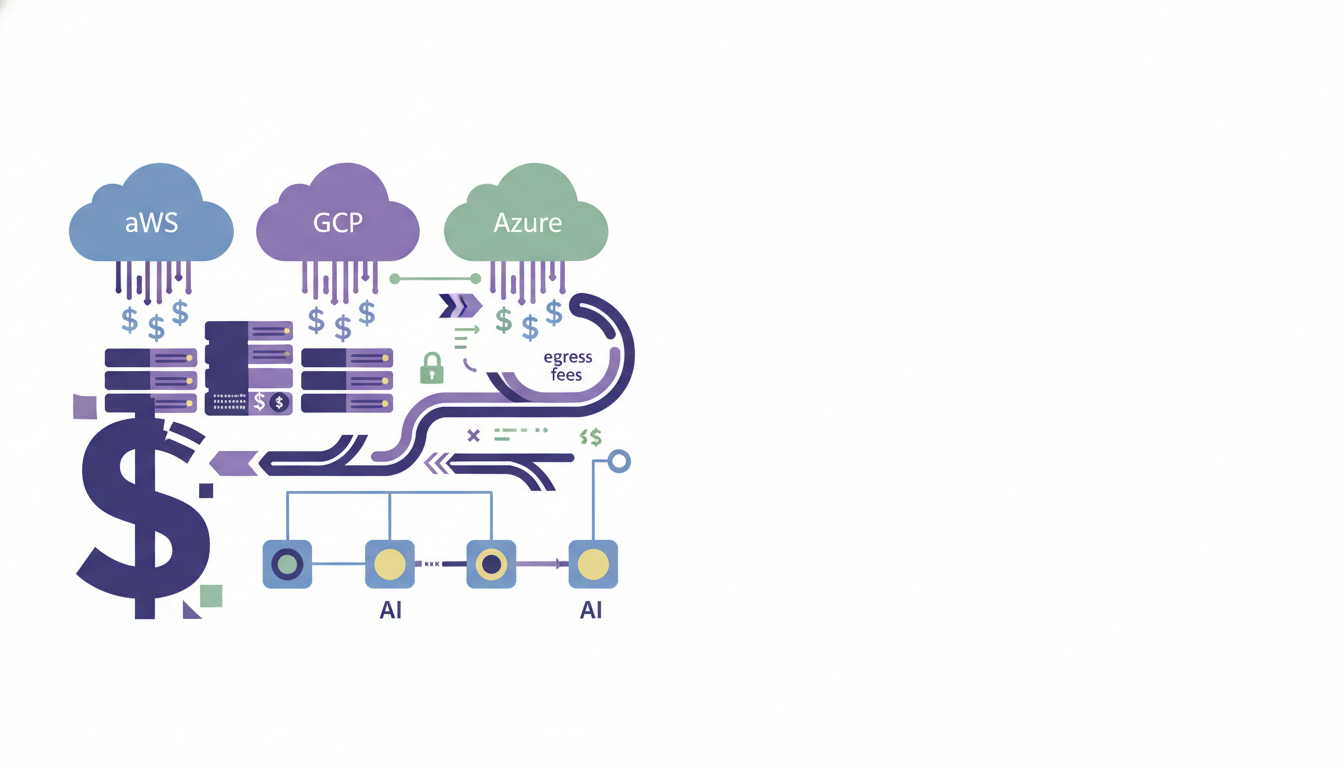

Moving training data and model states between clouds involves massive data transfers that most pricing calculators ignore. In one reported case, cross-cloud data egress fees (AWS-to-Azure synchronization for disaster recovery) accounted for 18% of total cloud spend.

Effective cross-cloud AI training requires:

Parallel data staging: Start moving your next epoch's data before you need it, and stage it on local SSD. Loading training data from local SSD is faster and reduces the impact of preemption on data pipelines.

Compression optimization: Model weights and gradients compress differently than typical data. Purpose-built compression can reduce transfer times by 40-60% without losing precision.

Incremental synchronization: Only move what changed since the last checkpoint. This works especially well with techniques like LoRA that modify small parameter subsets.
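Incremental synchronization can be sketched by comparing per-tensor digests between the last synced checkpoint and the current one. The example assumes tensors are already serialized to bytes; the tensor names and helper functions are hypothetical, and a real system would also need to handle deleted tensors and sharded files.

```python
import hashlib


def digest_map(tensors: dict[str, bytes]) -> dict[str, str]:
    """Map each tensor name to the SHA-256 of its serialized bytes."""
    return {name: hashlib.sha256(blob).hexdigest() for name, blob in tensors.items()}


def changed_tensors(previous: dict[str, str], current: dict[str, bytes]) -> dict[str, bytes]:
    """Return only tensors that are new or whose bytes changed since `previous`."""
    now = digest_map(current)
    return {name: current[name] for name, h in now.items() if previous.get(name) != h}


# Illustrative state: a frozen base weight plus two small LoRA adapters.
last_synced = digest_map({
    "base.weight": b"\x00" * 1024,
    "lora_A": b"\x01" * 16,
    "lora_B": b"\x02" * 16,
})
current_state = {
    "base.weight": b"\x00" * 1024,  # unchanged, so not re-sent
    "lora_A": b"\x03" * 16,         # updated by training
    "lora_B": b"\x04" * 16,         # updated by training
}

delta = changed_tensors(last_synced, current_state)
print(sorted(delta), sum(len(b) for b in delta.values()), "bytes to transfer")
```

With a frozen base model, only the adapter tensors end up in the delta, which is why this approach pairs well with LoRA-style fine-tuning.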

Real-Time Pricing Arbitrage: Making It Work

The real competition in 2026 is AI workload optimization: delivering the lowest total cost of ownership (TCO), highest tokens-per-dollar, and best performance-per-watt for training, fine-tuning, and especially inference. But pricing arbitrage algorithms need to account for migration costs, not just raw compute prices.

Effective pricing algorithms consider:

- Migration overhead: The cost of moving your current state to the cheaper provider

- Performance consistency: Headline savings depend on the model and the hardware. AWS pushes Trainium3 (training) and Inferentia2/3 (inference), claiming up to 40-70% lower cost than equivalent NVIDIA setups for compatible models, while Google Cloud's TPU v5e/v6e/v7 Ironwood series is said to deliver up to 4x better performance-per-dollar on transformer/LLM inference.

- Network latency: Cross-region or cross-cloud data synchronization adds training time

- Commitment conflicts: Reserved instance contracts that lock you into specific providers

The math gets complex quickly. A 30% cheaper GPU isn't worth migrating to if the data transfer takes six hours and costs $10,000.
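A back-of-the-envelope version of that math is sketched below. The 30% discount, six-hour transfer, and $10,000 transfer cost come from the example above; the $2.00 GPU-hour rate, the 64-GPU cluster, and the assumption that the target cluster is billed while it sits idle during the transfer are illustrative.

```python
from dataclasses import dataclass


@dataclass
class MigrationQuote:
    current_rate: float     # $/GPU-hour on the current provider
    target_rate: float      # $/GPU-hour on the candidate provider
    gpus: int
    remaining_hours: float  # training time left if the job stays put
    transfer_hours: float   # wall-clock time training stalls during the move
    transfer_cost: float    # egress and staging fees, in dollars


def net_savings(q: MigrationQuote) -> float:
    """Dollars saved by migrating; negative means staying is cheaper."""
    stay = q.current_rate * q.gpus * q.remaining_hours
    # Assumption: the target cluster is billed while it waits for data.
    move = q.transfer_cost + q.target_rate * q.gpus * (q.remaining_hours + q.transfer_hours)
    return stay - move


def break_even_hours(q: MigrationQuote) -> float:
    """Remaining training hours at which migrating starts to pay off."""
    if q.target_rate >= q.current_rate:
        raise ValueError("target provider is not cheaper")
    idle_cost = q.target_rate * q.gpus * q.transfer_hours
    return (q.transfer_cost + idle_cost) / (q.gpus * (q.current_rate - q.target_rate))


quote = MigrationQuote(
    current_rate=2.00, target_rate=1.40, gpus=64,
    remaining_hours=48.0, transfer_hours=6.0, transfer_cost=10_000.0,
)
print(f"net savings with 48 h left: ${net_savings(quote):,.0f}")
print(f"break-even at about {break_even_hours(quote):.0f} remaining hours")
```

Under these assumptions the move loses money with two days of training left and only pays off when well over a week of training remains, so the decision depends heavily on where in the run you are.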

Ensuring Training Continuity During Switches

The biggest fear with cross-cloud migration is losing training progress. Automated failover systems detect hardware failures and migrate training jobs to healthy nodes without data loss. But building this level of reliability requires several technical components working together:

Distributed coordination: Your training job needs to know which nodes are migrating and which aren't. Technologies like etcd or Consul help coordinate state across clouds, but they add complexity.

Graceful degradation: NVIDIA Run:ai includes a grace period mechanism for standard and distributed training workloads. This configurable delay allows workloads time to finish a checkpoint before being forcibly stopped.

Health monitoring: Real-time detection of performance degradation can tell you to migrate before a failure occurs. One production deployment reports that such a system reduced unplanned downtime by 65% across its A100 and H100 clusters. When a failure is predicted, the system automatically migrates training jobs to healthy GPUs and triggers a maintenance request.
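The grace-period and health-monitoring ideas can be sketched together as a training loop that checkpoints either when it is asked to stop or when throughput drops. This does not use the Run:ai API. It assumes the scheduler sends SIGTERM at the start of the grace period, as Kubernetes does when terminating a pod, and the class names, window size, and 80% threshold are hypothetical.

```python
import signal
from collections import deque
from statistics import mean


class StopFlag:
    """Set when the scheduler asks the job to stop (SIGTERM assumed)."""

    def __init__(self) -> None:
        self.requested = False

    def install(self) -> None:
        # Signal handlers can only be registered from the main thread.
        signal.signal(signal.SIGTERM, self._on_signal)

    def _on_signal(self, signum, frame) -> None:
        self.requested = True


class ThroughputMonitor:
    """Flags a reading that falls below drop_ratio x the rolling mean."""

    def __init__(self, window: int = 20, drop_ratio: float = 0.8) -> None:
        self.baseline = deque(maxlen=window)
        self.drop_ratio = drop_ratio

    def observe(self, samples_per_sec: float) -> bool:
        full = len(self.baseline) == self.baseline.maxlen
        degraded = full and samples_per_sec < self.drop_ratio * mean(self.baseline)
        if not degraded:
            # Keep degraded readings out of the baseline.
            self.baseline.append(samples_per_sec)
        return degraded


def run(throughputs, stop, monitor, save_checkpoint) -> str:
    """Consume per-step throughput readings; checkpoint before stopping early."""
    for step, reading in enumerate(throughputs):
        if stop.requested:
            save_checkpoint(step)
            return "preempted"
        if monitor.observe(reading):
            save_checkpoint(step)
            return "degraded"
    return "finished"


if __name__ == "__main__":
    stop = StopFlag()
    stop.install()
    saved_steps = []
    readings = [100, 100, 100, 100, 60, 100]  # illustrative samples/sec
    print(run(readings, stop, ThroughputMonitor(window=3), saved_steps.append), saved_steps)
```

Polling a flag between steps keeps the checkpoint out of the signal handler itself, so the save always happens at a step boundary where model and optimizer state are consistent.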

The Multi-Cloud Reality Check

Instead of weeks on a single GPU, distributed training can reduce workloads to days or even hours, provided the infrastructure supports:

- Fast GPU-to-GPU communication (NVLink or InfiniBand)

- High-throughput storage for checkpointing and datasets

- Stable, low-latency networking across nodes

Cross-cloud training adds another layer of complexity to this already demanding setup.

The technical reality is that true cross-cloud AI training isn't just about having APIs that work across providers. It requires purpose-built orchestration systems, intelligent data placement, and fault-tolerant architectures that most organizations aren't prepared to build themselves.

Enterprises adopting multi-cloud primarily for resilience or compliance must budget for 20–35% higher operational costs than single-cloud deployments. But for organizations training models that cost millions and can't afford provider lock-in, these complexities are worth solving.

The future belongs to platforms that make cross-cloud AI training as reliable as single-cloud training, but with the flexibility to optimize for cost, performance, and availability across any provider.

Ready to eliminate the 30-50% waste in your AI training costs through intelligent cross-cloud orchestration? Learn more about CloudShift AI and see how we make cross-cloud training migrations seamless and cost-effective.
